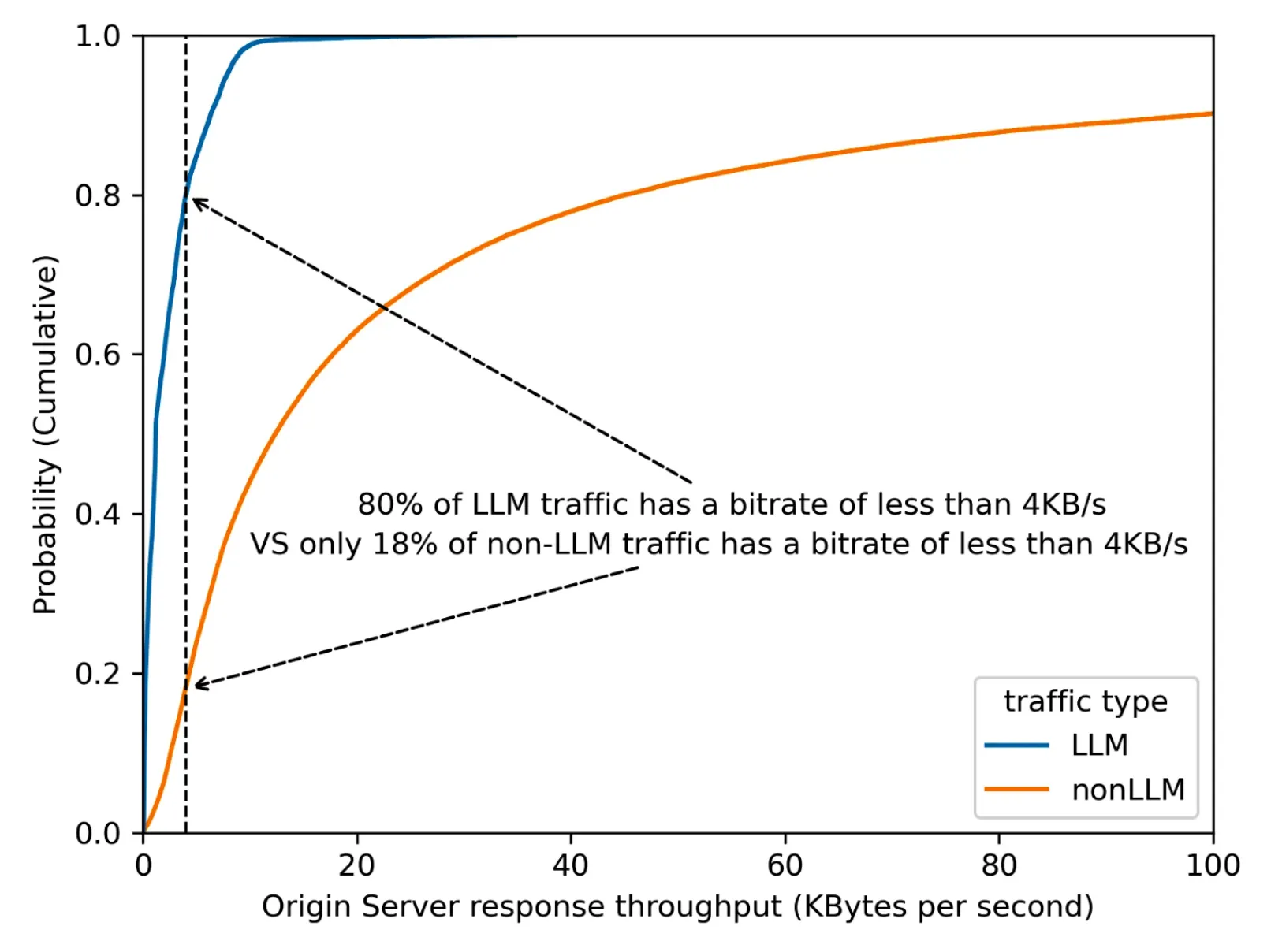

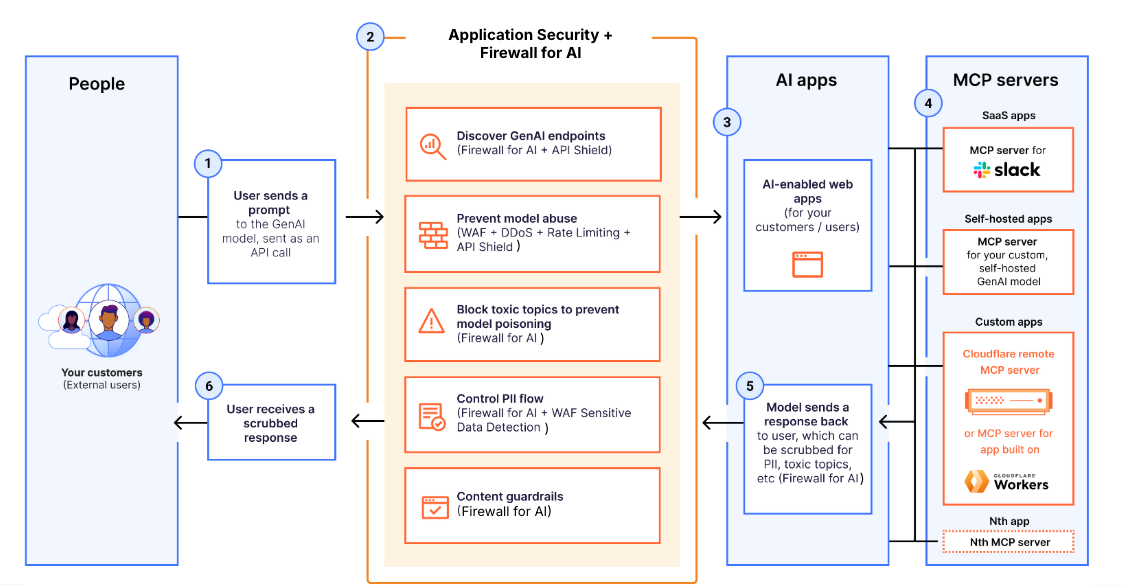

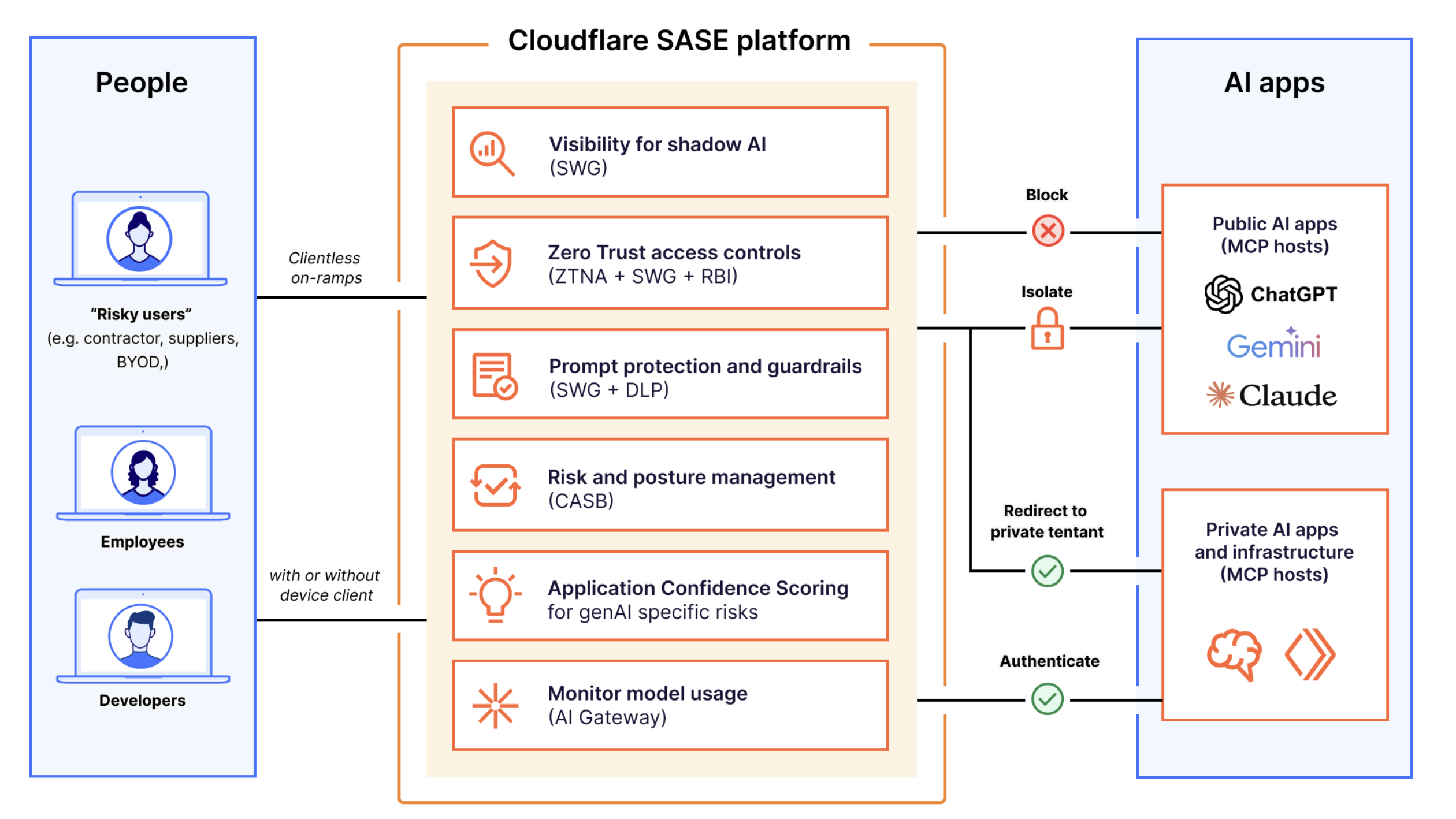

Explain AI security risks

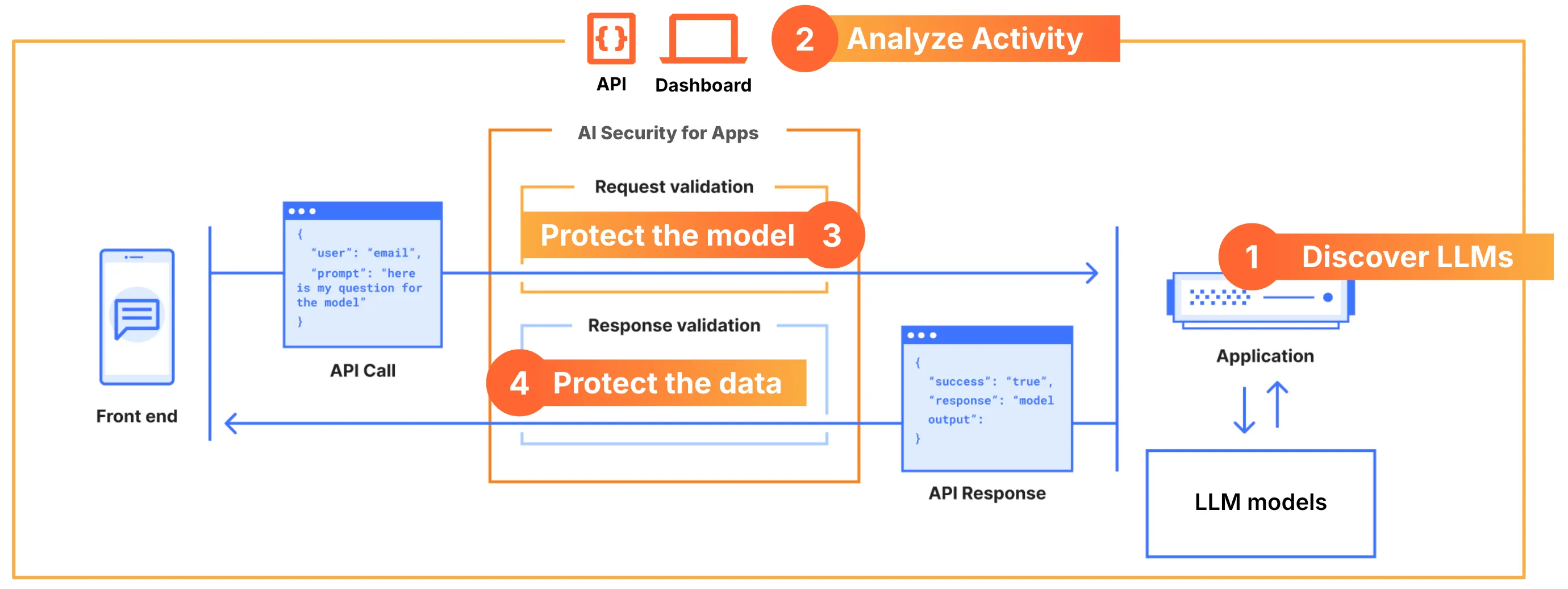

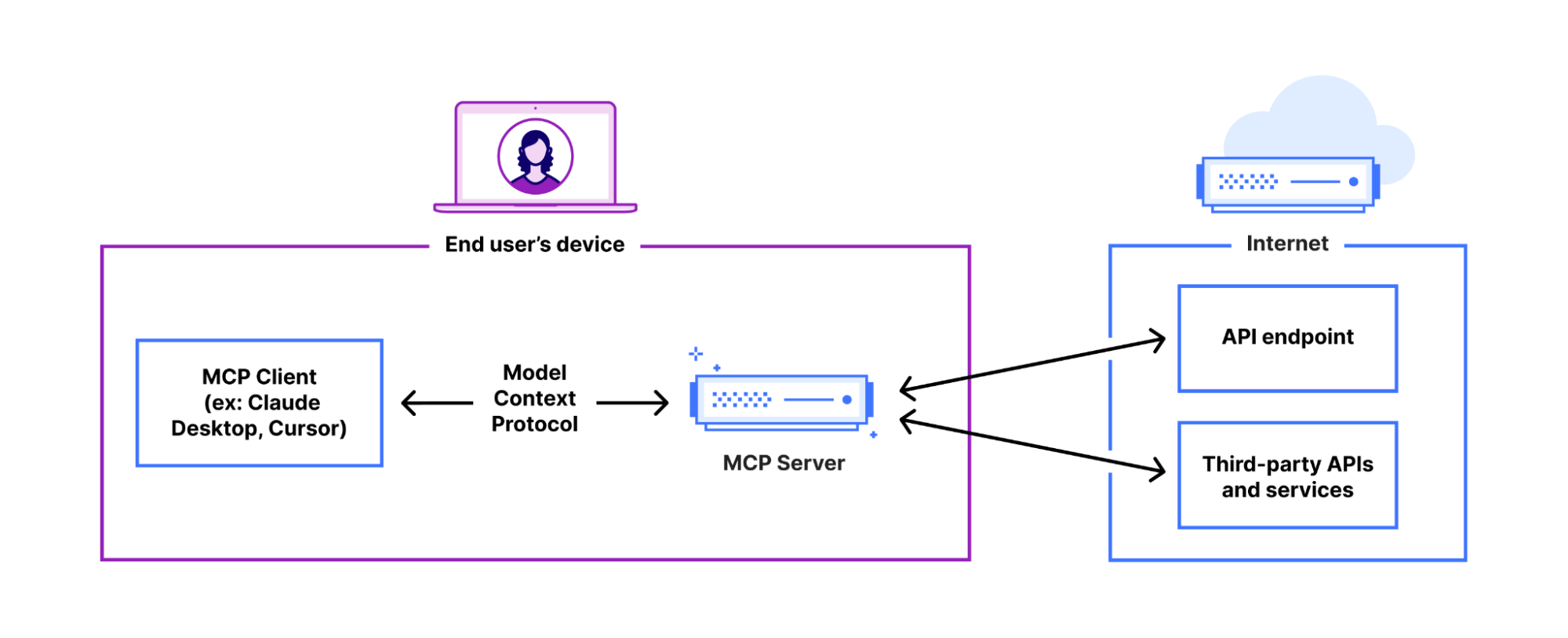

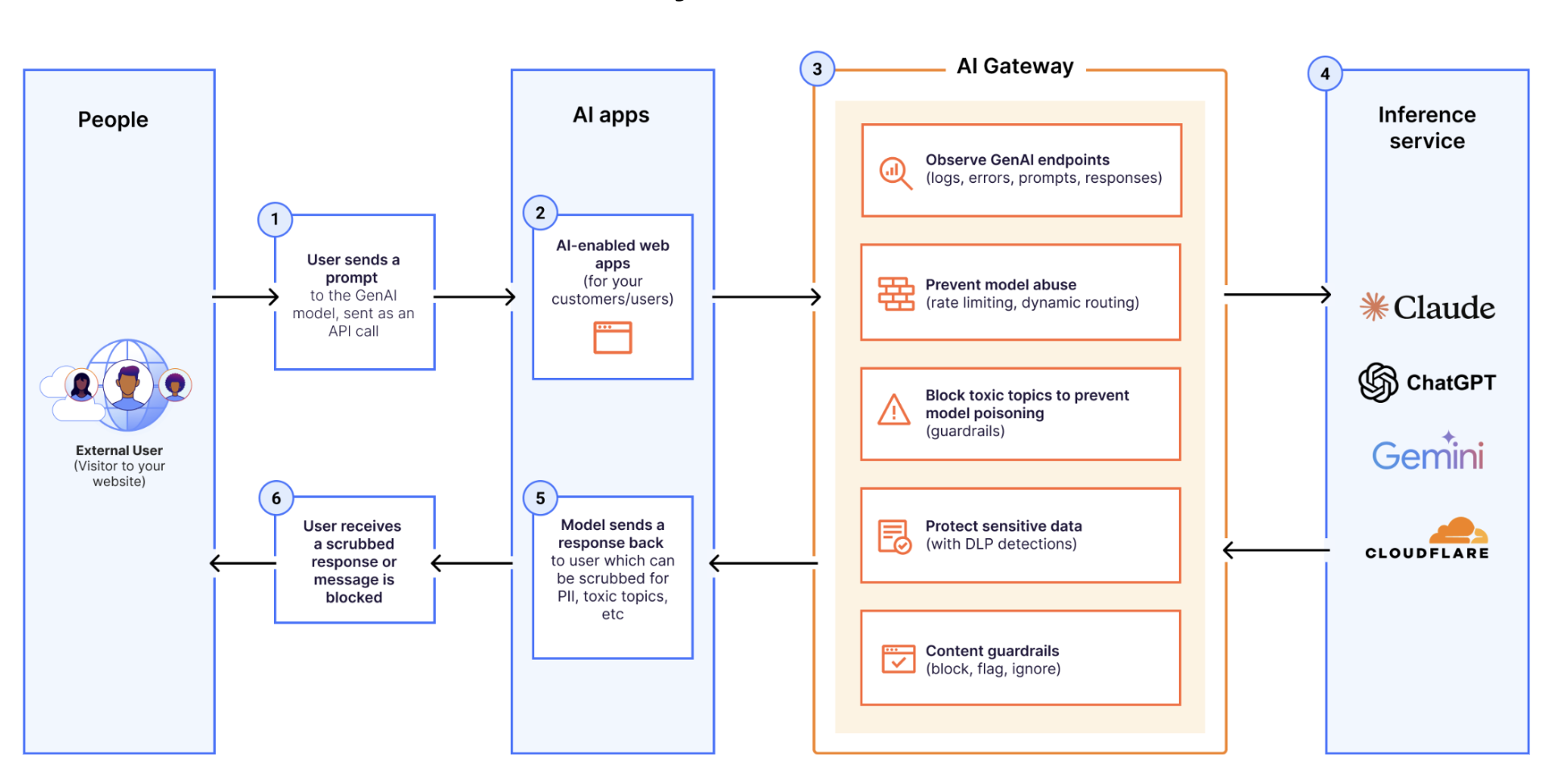

AI app threats (prompt injection, PII leakage, unsafe content), workforce GenAI data exposure (Shadow AI, prompt leakage), and unmanaged AI agent/MCP access

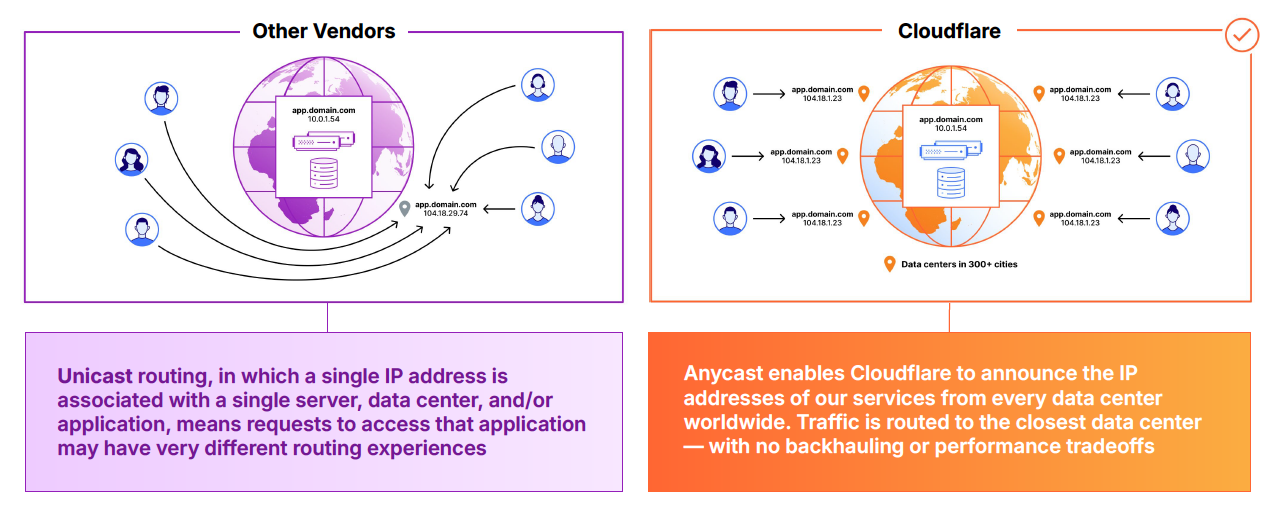

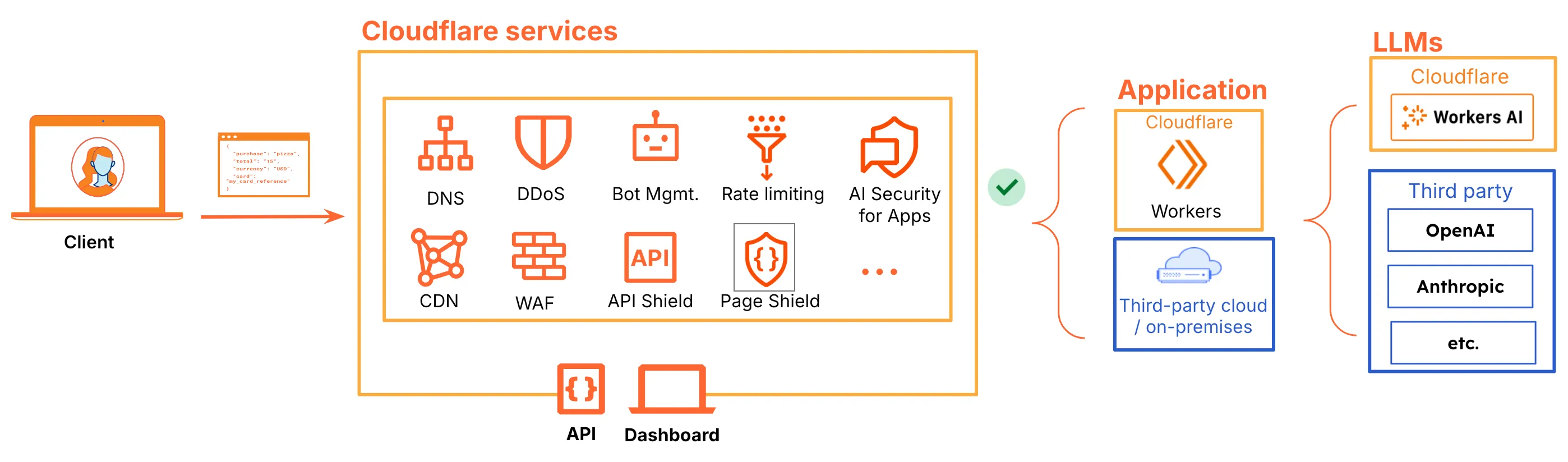

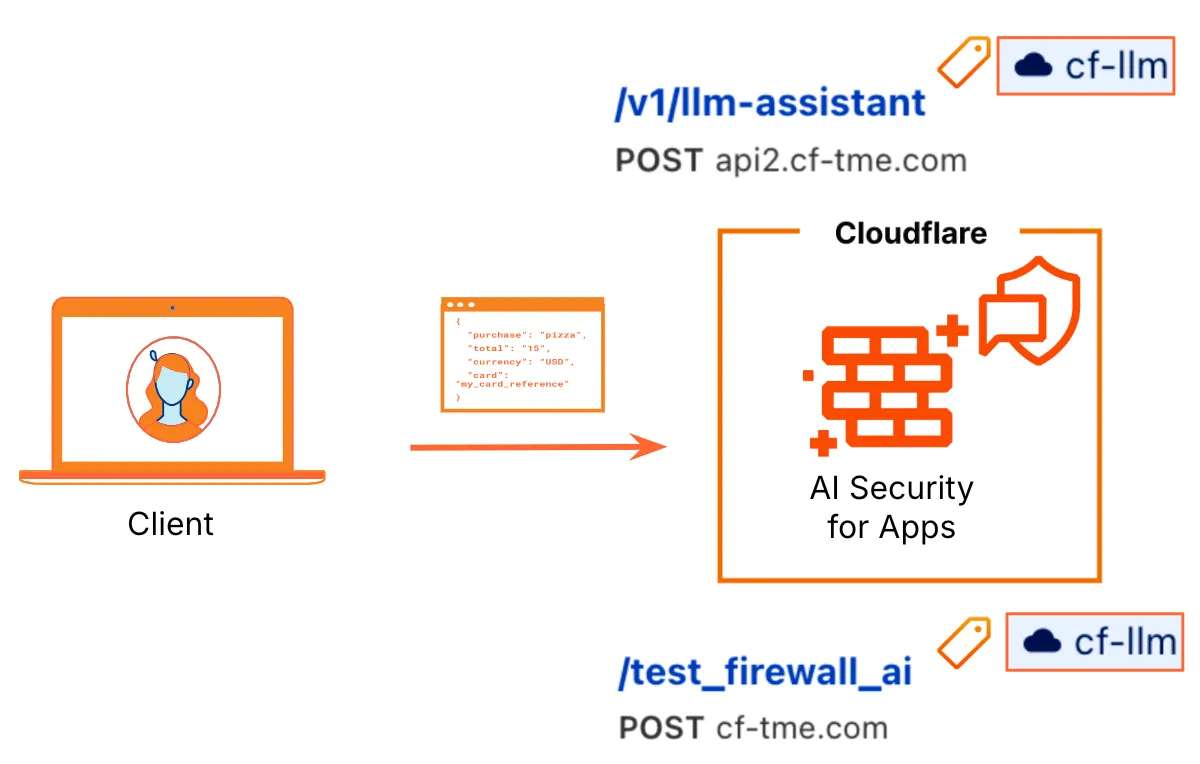

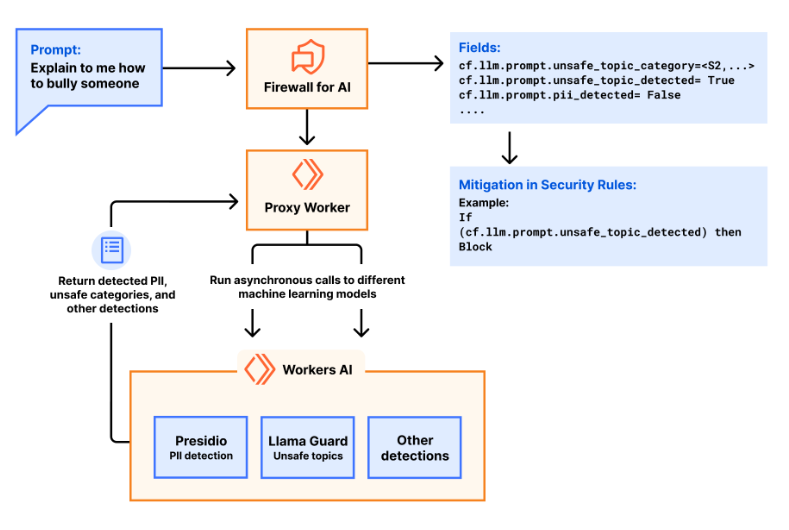

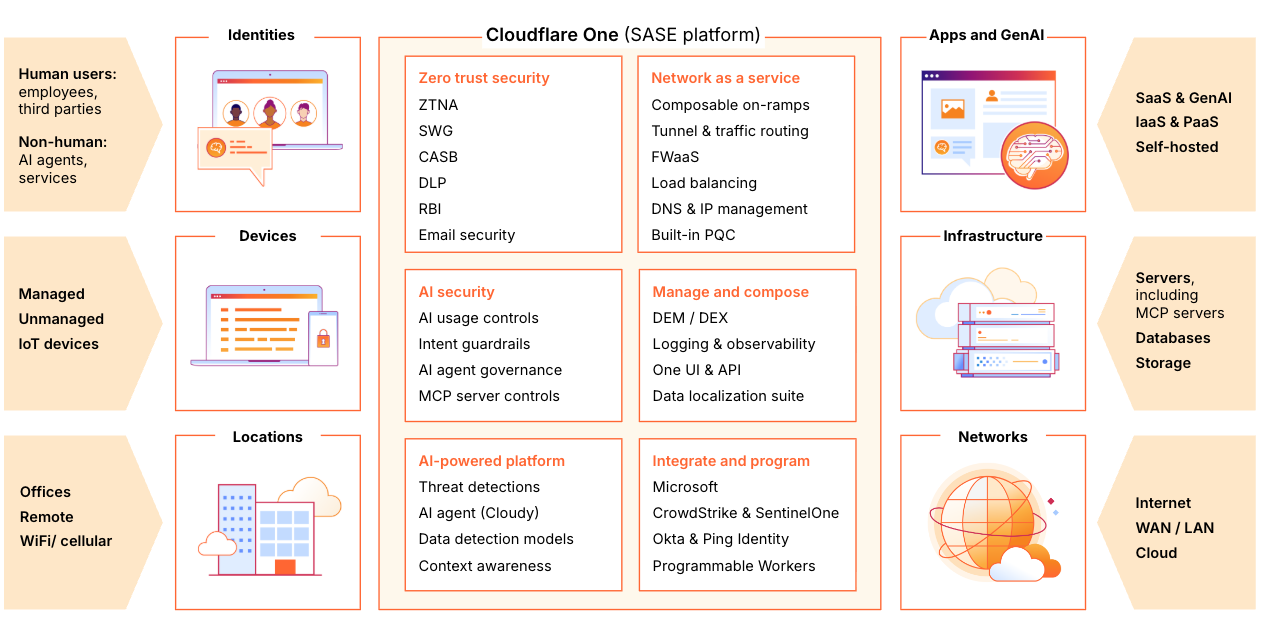

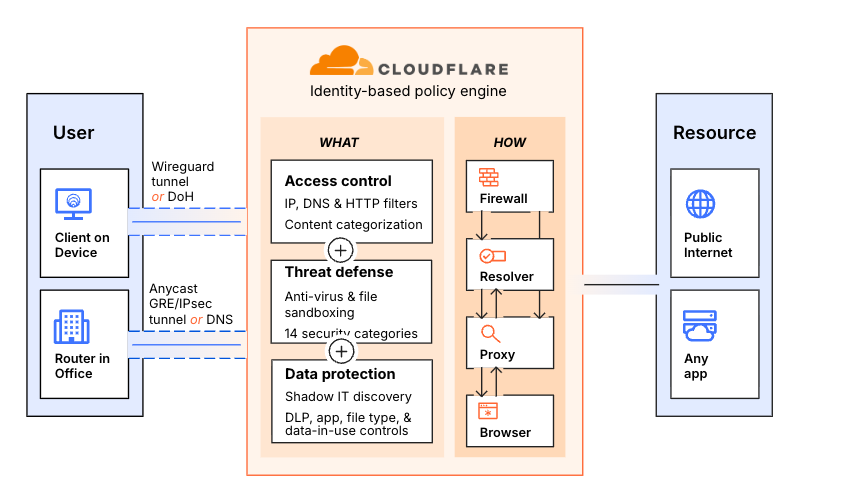

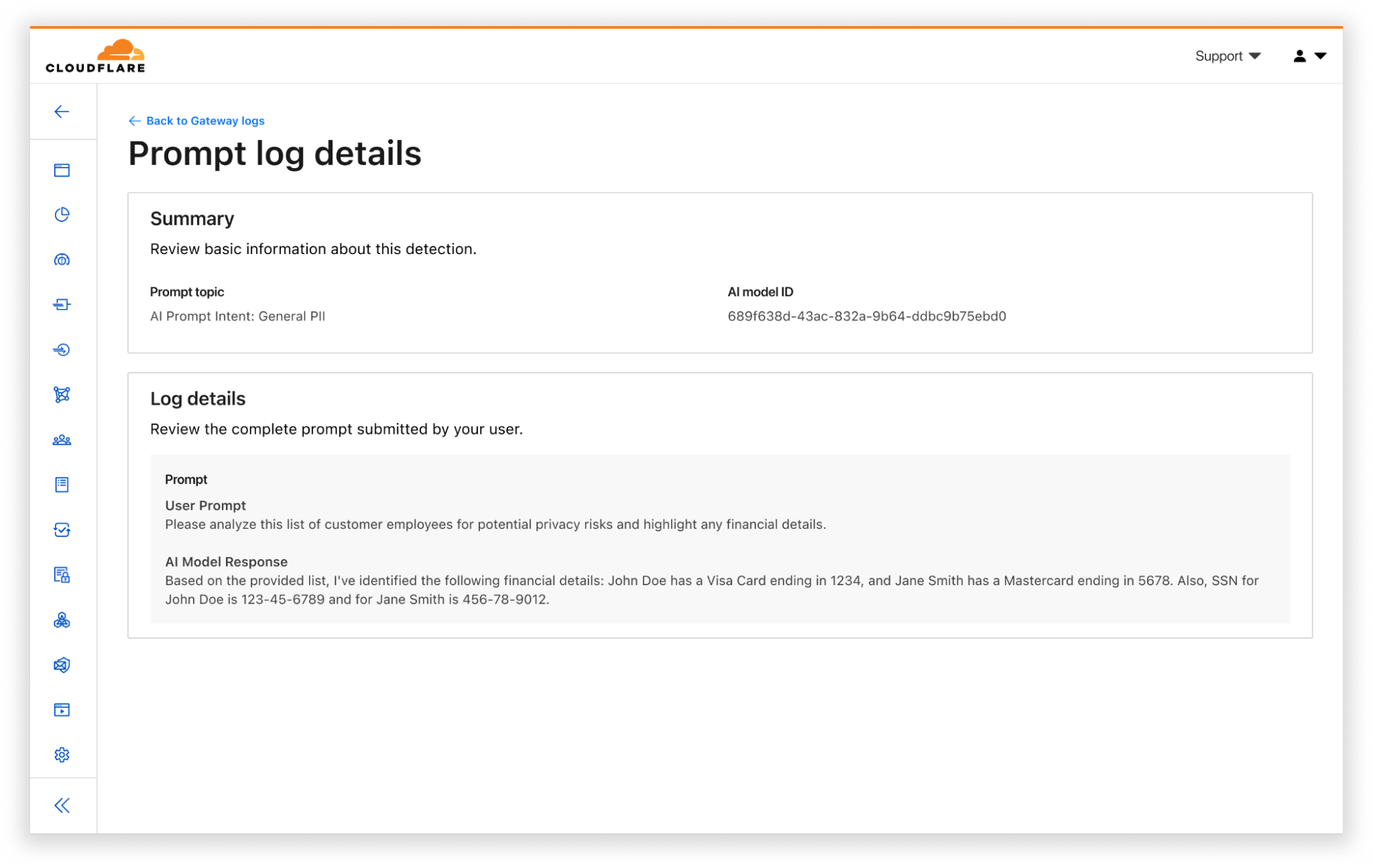

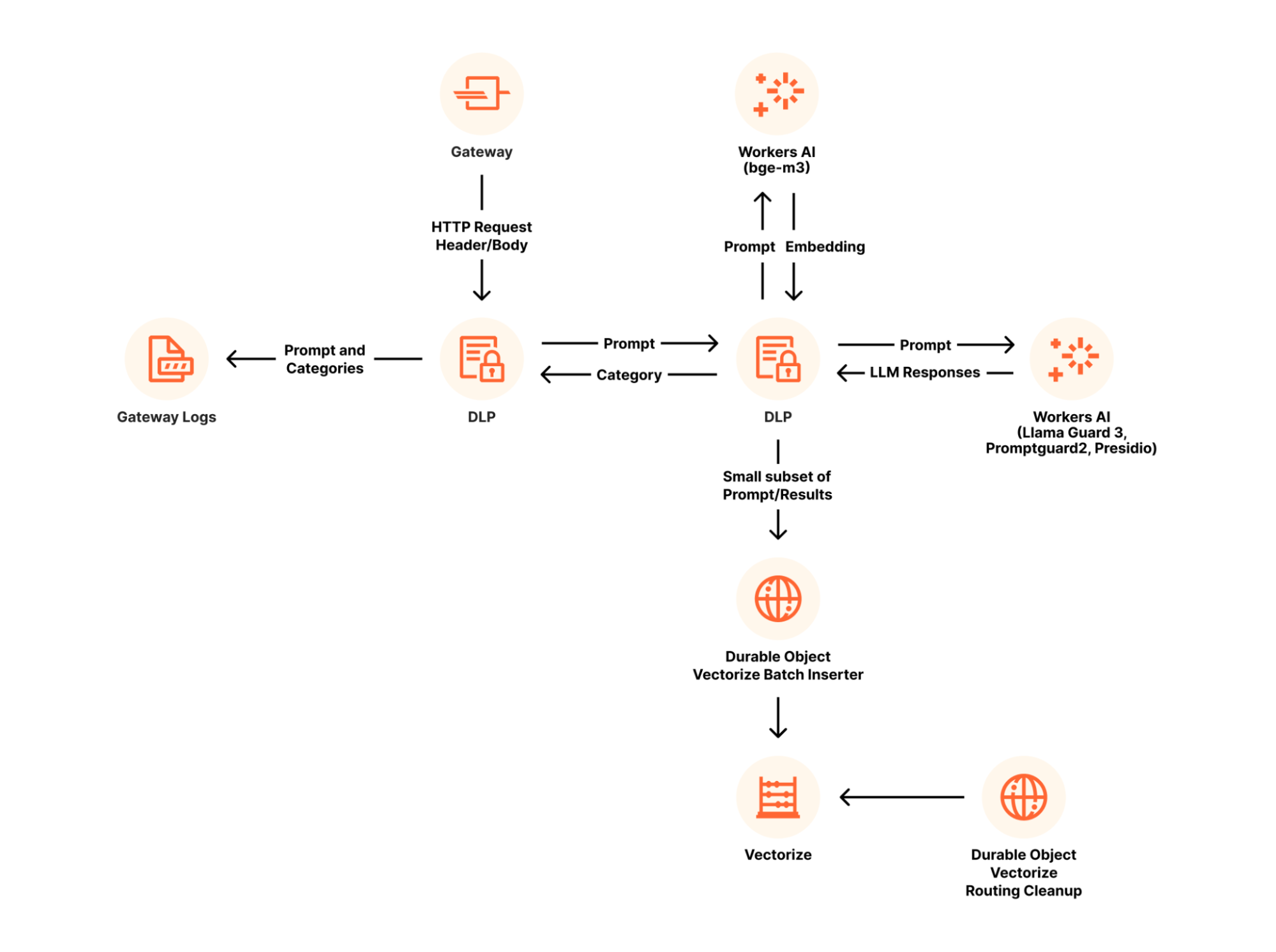

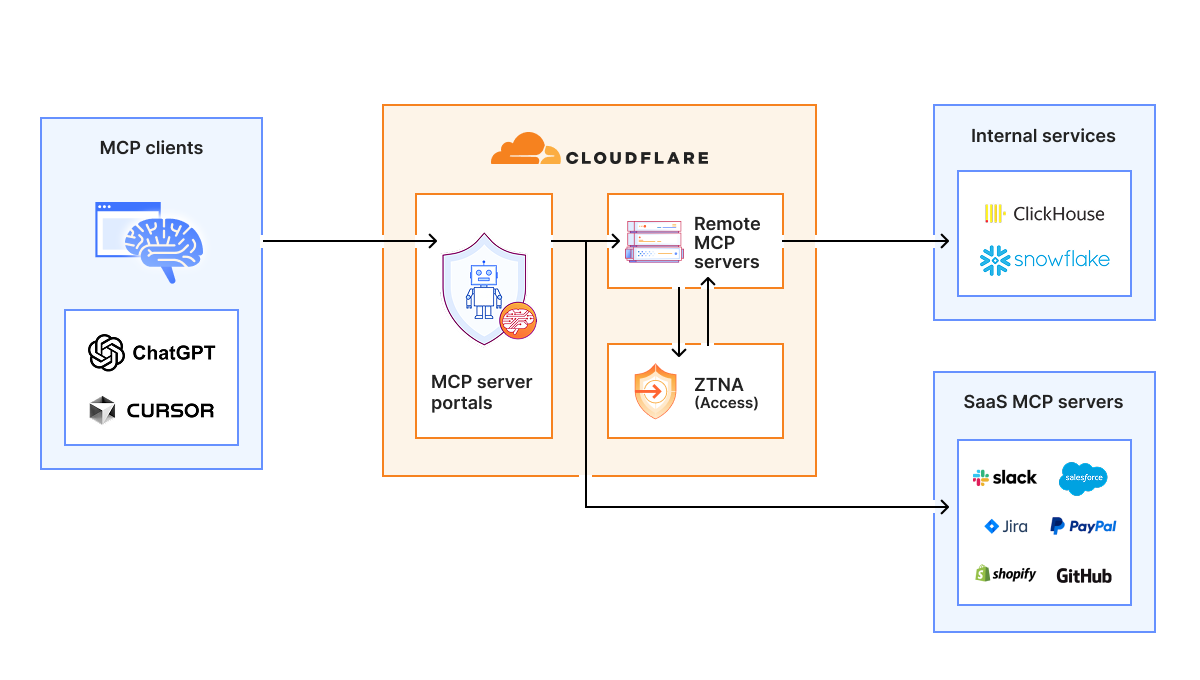

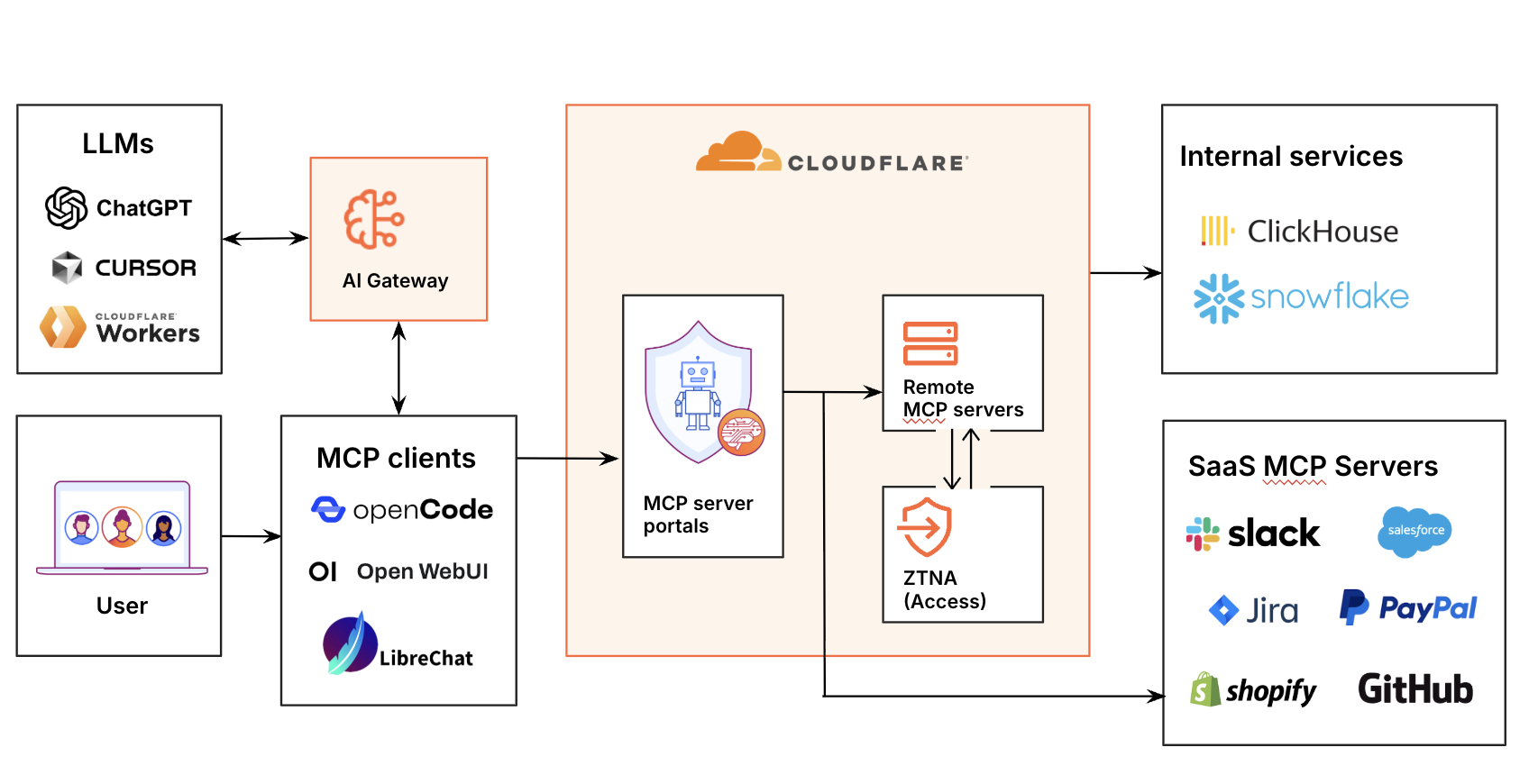

Map risks to Cloudflare controls

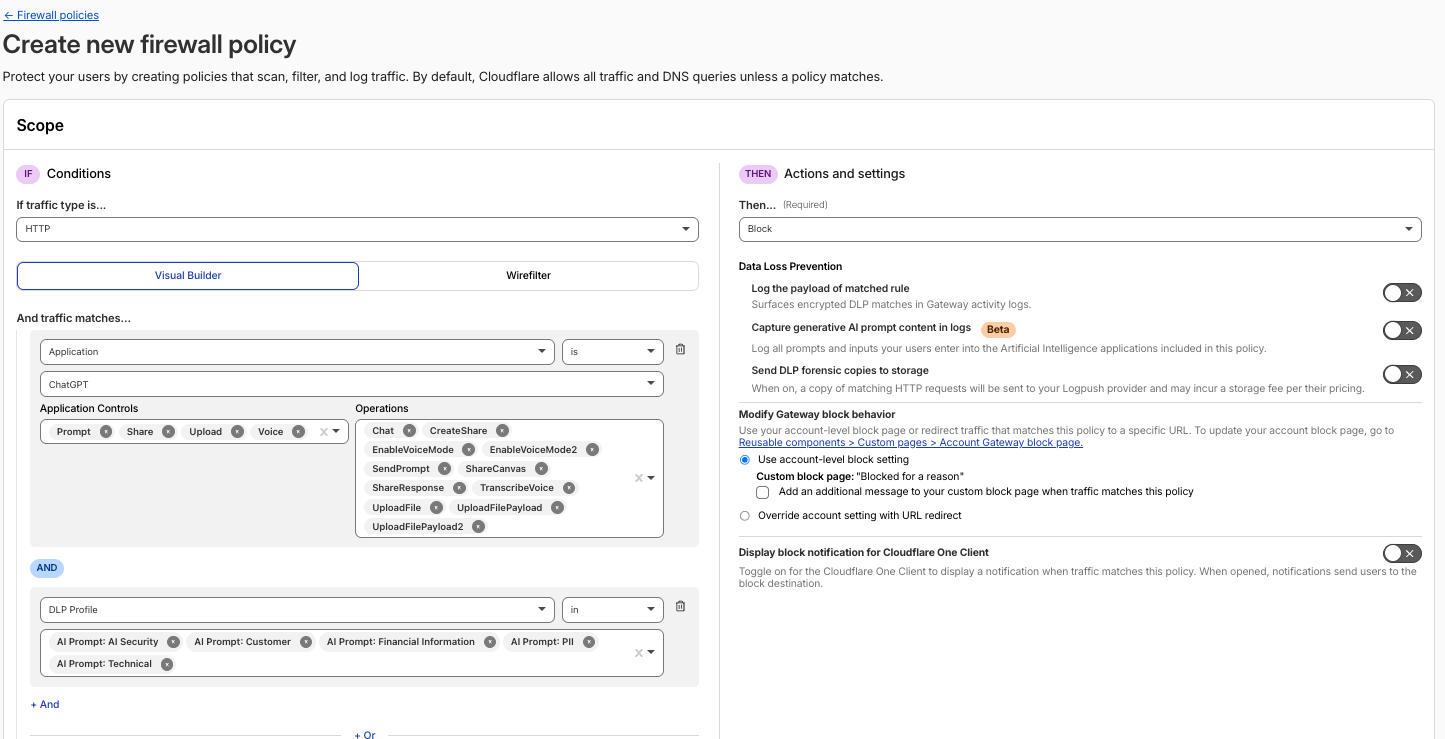

Connect each risk to the right product: AI Security for Apps, Gateway, DLP, Access, MCP Portal, and AI Gateway

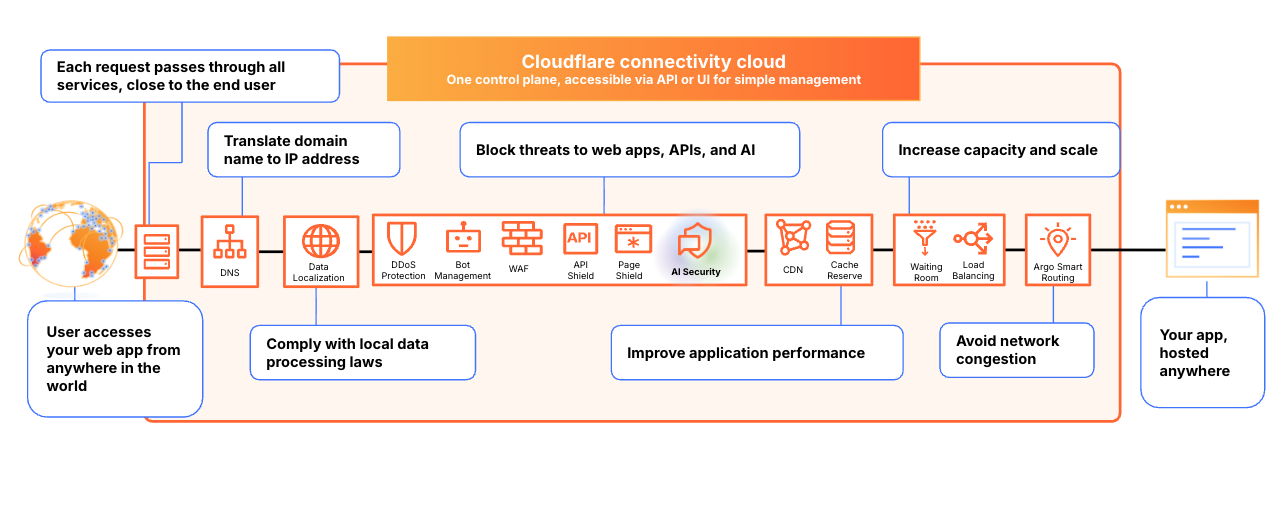

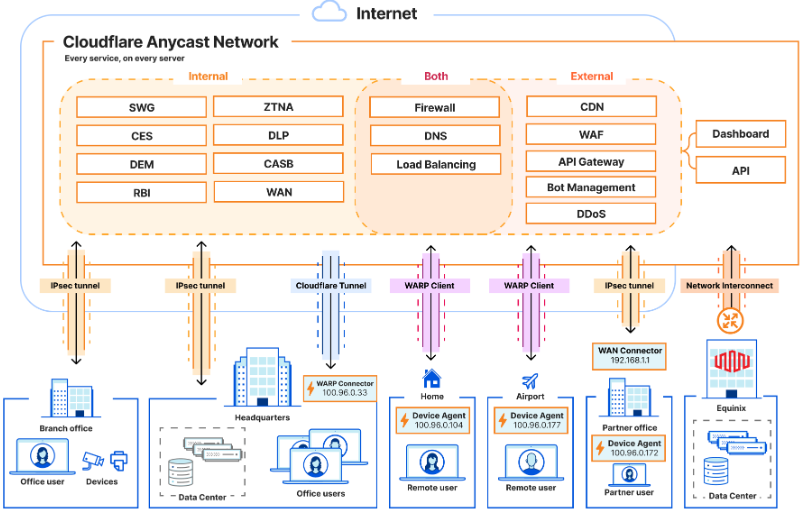

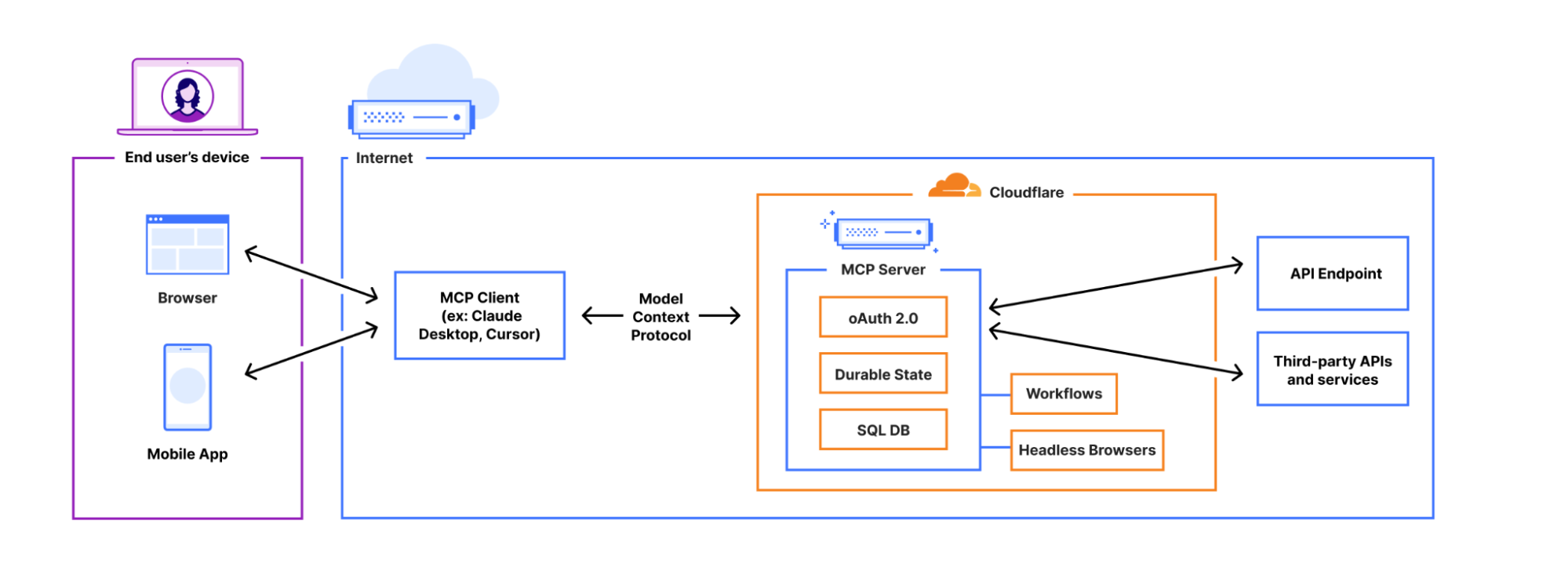

One platform, three security surfaces

How AI Security for Apps, Zero Trust / SASE, AI Gateway, and Access for MCP work together from one platform

Run a PoC / Demo

Practical implementation experience: configure, test, and demonstrate Cloudflare AI security controls in the afternoon lab